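A recurrent state $i$ is one to which the chain returns with probability $1$:

$$ f_{ii} = \mathbb{P}_i(T_i^+ < \infty) = 1, $$

where $T_i^+ = \min\{n \ge 1 : X_n = i\}$ is the first return time to $i$.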

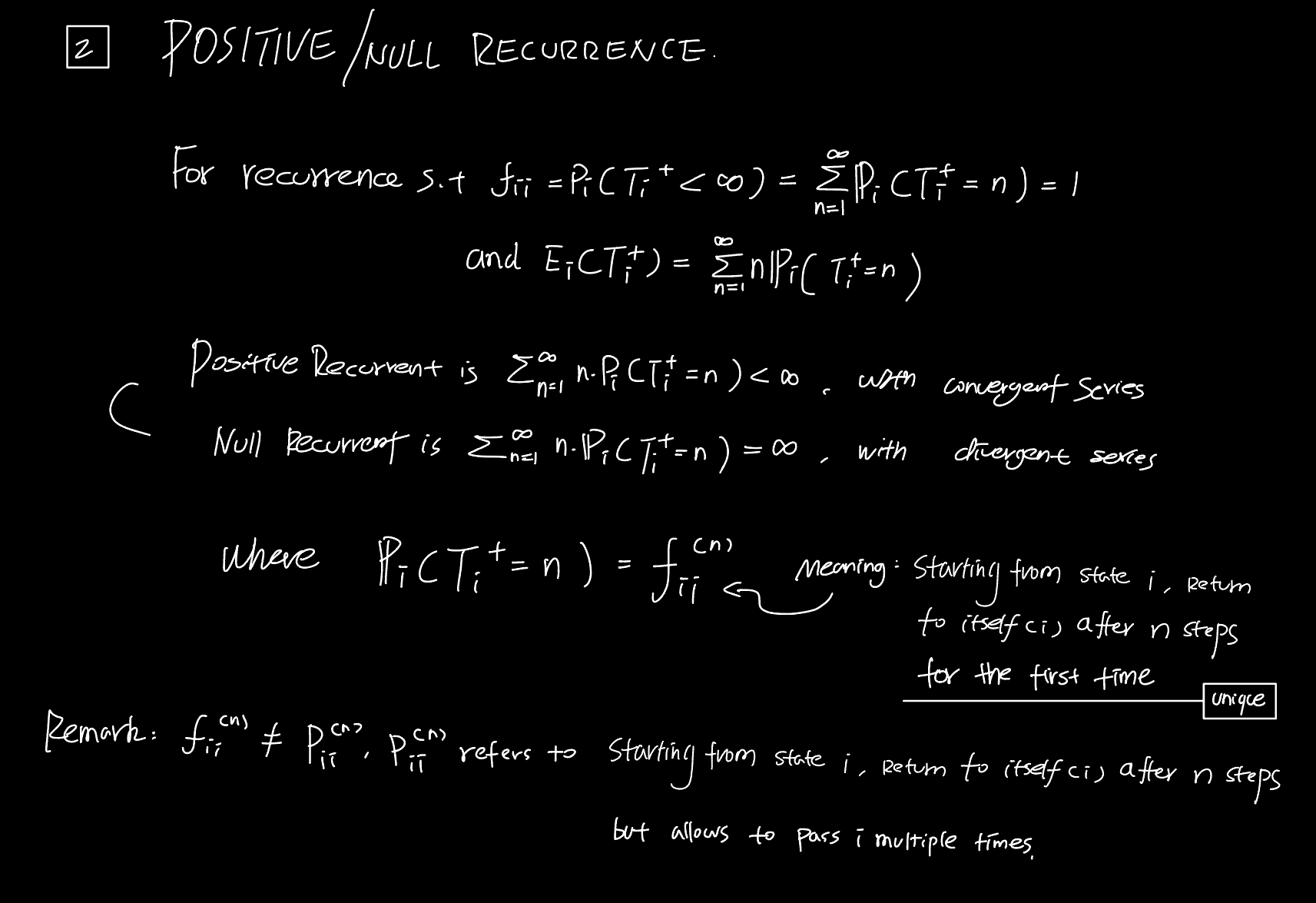

It is further classified by the expectation of the first return time $T_i^+$:

Positive recurrent:

$$ \mathbb{E}_i[T_i^+] < \infty. $$

Equivalently, since $T_i^+ < \infty$ almost surely for a recurrent state, the series

$$ \mathbb{E}_i[T_i^+] = \sum_{n=1}^{\infty} n \cdot \mathbb{P}_i(T_i^+ = n) $$

converges.

Here, $\mathbb{P}_i(T_i^+ = n)$ can be computed further using the f-expansion and first-step analysis. The handwritten notes above give some directions for further investigation.
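As a minimal sketch of the first-step recursion, assuming a small finite chain: the hypothetical helper `first_return_distribution` below tracks $g_n(j) = \mathbb{P}_j(T_i^+ = n)$ using $g_1(j) = P_{ji}$ and $g_n(j) = \sum_{k \ne i} P_{jk}\, g_{n-1}(k)$ (first step to some $k \ne i$, then a first visit to $i$ after $n-1$ more steps), and truncates both series at `n_max`. The 3-state matrix `P` is an illustrative assumption, not a chain taken from the course.

```python
def first_return_distribution(P, i, n_max):
    """Return [P_i(T_i^+ = n) for n = 1, ..., n_max] for a finite chain.

    Hypothetical helper. g[j] holds g_n(j) = P_j(T_i^+ = n), updated by
    first-step analysis: g_1(j) = P[j][i] and, for n >= 2,
    g_n(j) = sum over k != i of P[j][k] * g_{n-1}(k).
    """
    states = range(len(P))
    g = [P[j][i] for j in states]  # n = 1: jump straight to i
    dist = [g[i]]
    for _ in range(2, n_max + 1):
        # First step to some k != i, then first visit to i after n - 1 more steps.
        g = [sum(P[j][k] * g[k] for k in states if k != i) for j in states]
        dist.append(g[i])
    return dist


# Illustrative 3-state chain (an assumption for this sketch).
P = [[0.5, 0.5, 0.0],
     [0.25, 0.5, 0.25],
     [0.0, 0.5, 0.5]]

dist = first_return_distribution(P, 0, 1000)
return_prob = sum(dist)                                  # truncated f_00
mean_return = sum(n * p for n, p in enumerate(dist, 1))  # truncated E_0[T_0^+]
print(dist[:3], return_prob, mean_return)
```

For this chain the first-return probabilities decay geometrically, so the truncated sums come out very close to $f_{00} = 1$ and $\mathbb{E}_0[T_0^+] = 4$; a null recurrent state is exactly the case where the second sum would not settle down.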

Null recurrent:

$$ \mathbb{E}_i[T_i^+] = \infty. $$

Equivalently, the series

$$ \sum_{n=1}^{\infty} n \cdot \mathbb{P}_i(T_i^+ = n) $$

diverges.

A null recurrent state still returns with probability $1$, but its expected return time is infinite.

Fundamental Theorem of Markov Chains - Lemma Form

For an irreducible Markov chain,

$$ \text{there exists a stationary distribution} \iff \text{the chain is positive recurrent}. $$

When the chain is positive recurrent, the stationary distribution is unique and satisfies

$$ \pi_i = \frac{1}{\mathbb{E}_i[T_i^+]} \quad \text{for every state } i. $$
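The identity $\pi_i = 1/\mathbb{E}_i[T_i^+]$ can be checked exactly on a small example. This is a minimal sketch assuming a finite, irreducible chain; `solve`, `stationary`, and `expected_return_time` are hypothetical helpers written for illustration, and the 3-state matrix is an assumed example. The stationary distribution comes from solving $\pi P = \pi$ with $\sum_i \pi_i = 1$, and $\mathbb{E}_i[T_i^+]$ comes from first-step analysis on the mean hitting times $m_j$ of $i$ from $j \ne i$.

```python
from fractions import Fraction as F


def solve(A, b):
    """Solve A x = b exactly by Gauss-Jordan elimination (A square, nonsingular)."""
    n = len(A)
    M = [[F(x) for x in row] + [F(rhs)] for row, rhs in zip(A, b)]
    for col in range(n):
        piv = next(r for r in range(col, n) if M[r][col] != 0)
        M[col], M[piv] = M[piv], M[col]
        p = M[col][col]
        M[col] = [x / p for x in M[col]]
        for r in range(n):
            if r != col:
                factor = M[r][col]
                M[r] = [a - factor * c for a, c in zip(M[r], M[col])]
    return [M[r][n] for r in range(n)]


def stationary(P):
    """Solve pi P = pi with sum(pi) = 1 (one redundant balance equation replaced)."""
    n = len(P)
    # Row i encodes (pi P)_i - pi_i = 0.
    A = [[P[j][i] - (1 if i == j else 0) for j in range(n)] for i in range(n)]
    A[-1] = [1] * n  # normalization replaces the last balance equation
    b = [0] * (n - 1) + [1]
    return solve(A, b)


def expected_return_time(P, i):
    """E_i[T_i^+] by first-step analysis.

    For j != i, m_j = E_j[time to hit i] solves m_j = 1 + sum_{k != i} P[j][k] m_k;
    then E_i[T_i^+] = 1 + sum_{k != i} P[i][k] m_k.
    """
    others = [j for j in range(len(P)) if j != i]
    A = [[(1 if j == k else 0) - P[j][k] for k in others] for j in others]
    m = dict(zip(others, solve(A, [1] * len(others))))
    return 1 + sum(P[i][k] * m[k] for k in others)


# Illustrative irreducible 3-state chain (an assumption for this sketch).
P = [[F(1, 2), F(1, 2), F(0)],
     [F(1, 4), F(1, 2), F(1, 4)],
     [F(0), F(1, 2), F(1, 2)]]

pi = stationary(P)
returns = [expected_return_time(P, i) for i in range(len(P))]
for i in range(len(P)):
    assert pi[i] == 1 / returns[i]  # pi_i = 1 / E_i[T_i^+], exactly
print(pi, returns)
```

Exact `Fraction` arithmetic is used so that the theorem's equality holds with `==` rather than only up to floating-point rounding.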

Examples

Positive recurrent: In Midterm 1 Q5, the unique stationary distribution satisfies

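$$ \pi_0 = \frac{1}{4}. $$

Hence, by the theorem above,

$$ \mathbb{E}_0[T_0^+] = \frac{1}{\pi_0} = 4 < \infty. $$

Null recurrent: The symmetric ($p = \tfrac{1}{2}$) simple random walk on $\mathbb{Z}$ is irreducible and satisfies

$$ f_{ii} = 1, $$

but it does not admit a stationary distribution. By the theorem above, it is therefore not positive recurrent; since it is recurrent, it is null recurrent:

$$ \mathbb{E}_i[T_i^+] = \infty. $$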
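The divergence for the symmetric walk can be seen numerically. A minimal sketch, assuming the classical formulas for the walk started at $0$: $u_{2n} = \mathbb{P}_0(X_{2n} = 0) = \binom{2n}{n} 4^{-n}$ and $\mathbb{P}_0(T_0^+ = 2n) = \frac{u_{2n}}{2n-1}$ (odd return times are impossible). The helper name `srw_partial_sums` is hypothetical. The partial sums of $\mathbb{P}_0(T_0^+ = m)$ creep toward $1$, while the partial sums of $m \cdot \mathbb{P}_0(T_0^+ = m)$ keep growing, roughly like $2\sqrt{n/\pi}$, because $2n \cdot \mathbb{P}_0(T_0^+ = 2n) \approx 1/\sqrt{\pi n}$.

```python
def srw_partial_sums(n_max):
    """Partial sums for the symmetric simple random walk on Z started at 0.

    Hypothetical helper. Returns (sum of P_0(T_0^+ = m), sum of m * P_0(T_0^+ = m))
    over m <= 2 * n_max, using u_{2n} = C(2n, n) / 4**n via the ratio
    u_{2n} = u_{2n-2} * (2n - 1) / (2n) and P_0(T_0^+ = 2n) = u_{2n} / (2n - 1).
    """
    u = 1.0  # u_0 = 1
    prob_sum = 0.0
    mean_sum = 0.0
    for n in range(1, n_max + 1):
        u *= (2 * n - 1) / (2 * n)
        f = u / (2 * n - 1)  # P_0(T_0^+ = 2n)
        prob_sum += f
        mean_sum += 2 * n * f
    return prob_sum, mean_sum


for n_max in (100, 10_000, 1_000_000):
    prob_sum, mean_sum = srw_partial_sums(n_max)
    print(f"n_max={n_max}: return prob ~ {prob_sum:.4f}, truncated mean ~ {mean_sum:.1f}")
```

The return probability approaches $1$ (recurrence), but each hundredfold increase in `n_max` multiplies the truncated mean by roughly ten, consistent with $\mathbb{E}_0[T_0^+] = \infty$.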

Question 5