Setup

Task:

input $x \in \mathbb{R}^2$

output $\hat{y} \in \mathbb{R}^1$

Structure:

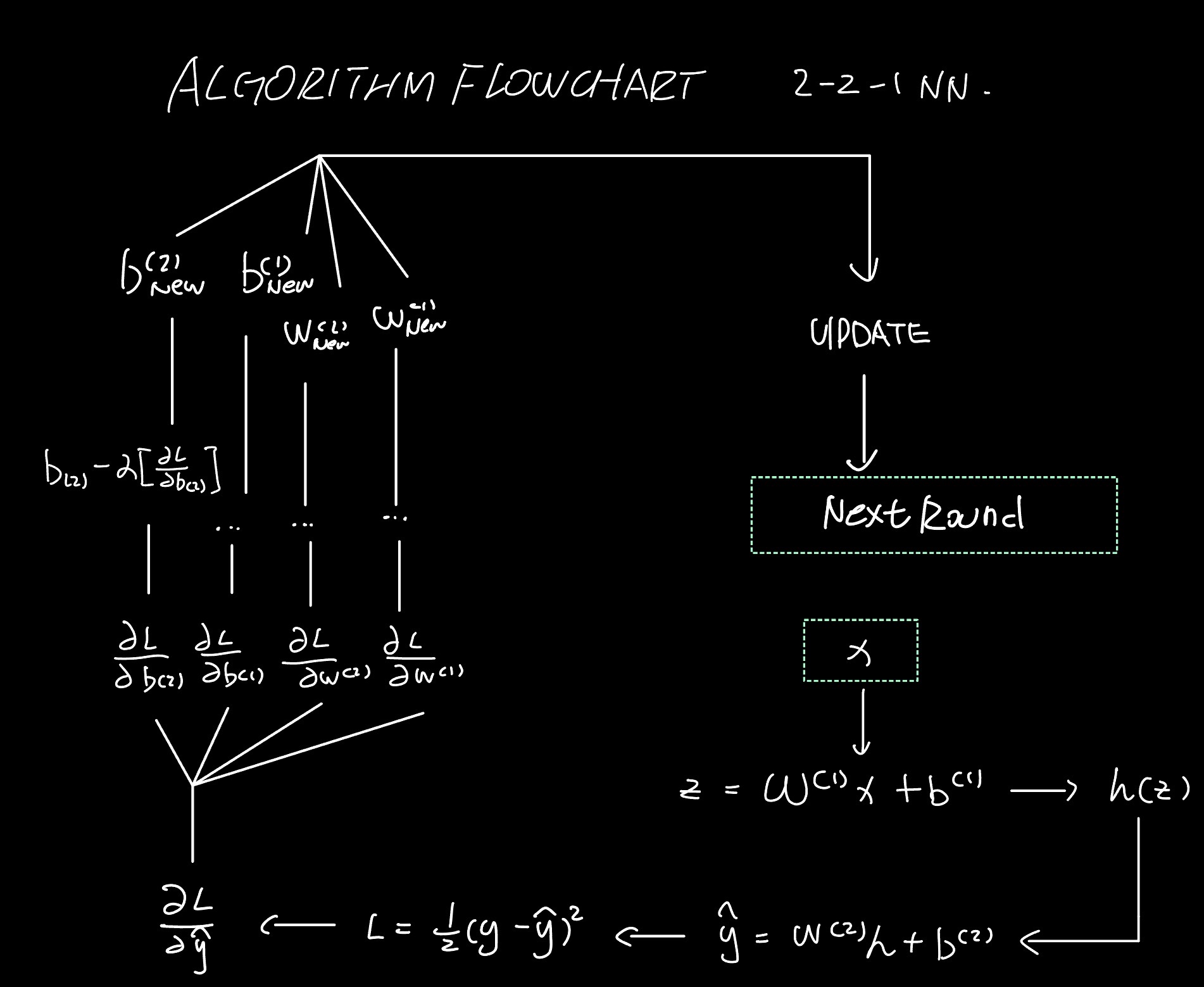

$$h = \text{ReLU}(W^{(1)}x + b^{(1)})$$

$$\hat{y} = W^{(2)}h + b^{(2)}$$

Parameter Dimensionality:

$W^{(1)} \in \mathbb{R}^{2 \times 2}$, $b^{(1)} \in \mathbb{R}^2$

$W^{(2)} \in \mathbb{R}^{1 \times 2}$, $b^{(2)} \in \mathbb{R}$
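The structure and shapes above can be sketched in plain Python, with nested lists standing in for matrices. The function names (`relu`, `matvec`, `forward`) and the sample parameter values are illustrative assumptions, not part of the notes; they are chosen so that ReLU switches one hidden unit off.

```python
def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, a) for a in v]


def matvec(M, v):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(m * a for m, a in zip(row, v)) for row in M]


def forward(W1, b1, W2, b2, x):
    """Return (z1, h, y_hat) for the 2-2-1 network."""
    z1 = [z + b for z, b in zip(matvec(W1, x), b1)]  # pre-activation, 2 entries
    h = relu(z1)                                     # hidden layer, 2 entries
    y_hat = matvec(W2, h)[0] + b2                    # scalar output
    return z1, h, y_hat


# Hypothetical parameters with the shapes listed above.
W1 = [[1.0, 0.0], [0.0, -1.0]]  # 2 x 2
b1 = [0.0, 0.0]                 # 2
W2 = [[2.0, 3.0]]               # 1 x 2
b2 = 0.5                        # scalar

z1, h, y_hat = forward(W1, b1, W2, b2, [1.0, 2.0])
print(z1, h, y_hat)
```

With these made-up values the second pre-activation is negative, so the second hidden unit contributes nothing to the output.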

Loss Function:

$$L = \frac{1}{2}(\hat{y} - y)^2$$

Phase 0: Init.

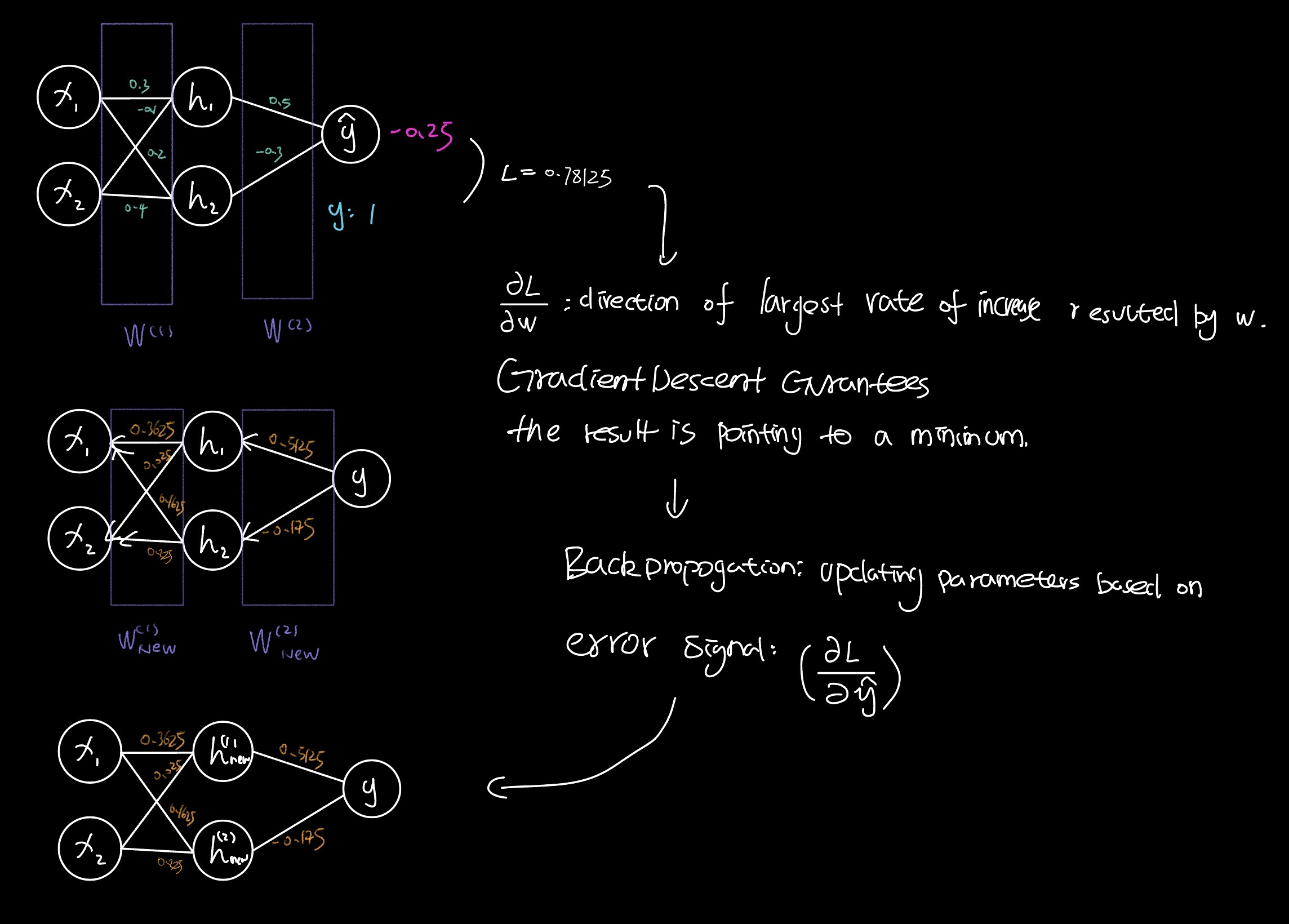

$$W^{(1)} = \begin{bmatrix} 0.3 & -0.1 \\ 0.2 & 0.4 \end{bmatrix} \quad b^{(1)} = \begin{bmatrix} 0 \\ 0 \end{bmatrix}$$

$$W^{(2)} = \begin{pmatrix} 0.5 & -0.3 \end{pmatrix} \quad b^{(2)} = 0$$

Phase 1: Forward Pass.

input $x = \begin{pmatrix} 1 \\ 2 \end{pmatrix}$, target $y = 1$

1. Pre-activation

$$z^{(1)} = W^{(1)}x + b^{(1)} = \begin{pmatrix} 0.3 & -0.1 \\ 0.2 & 0.4 \end{pmatrix} \begin{pmatrix} 1 \\ 2 \end{pmatrix} = \begin{pmatrix} 0.1 \\ 1.0 \end{pmatrix}$$

2. Activation

$$h = \text{ReLU}(z^{(1)}) = \begin{pmatrix} \max(0, 0.1) \\ \max(0, 1.0) \end{pmatrix} = \begin{pmatrix} 0.1 \\ 1.0 \end{pmatrix}$$

3. Output

$$\hat{y} = W^{(2)}h + b^{(2)} = \begin{pmatrix} 0.5 & -0.3 \end{pmatrix} \begin{pmatrix} 0.1 \\ 1.0 \end{pmatrix} + 0 = 0.05 + (-0.3) = -0.25$$

4. Loss

$$L = \frac{1}{2}(\hat{y} - y)^2 = \frac{1}{2}(-0.25 - 1)^2 = \frac{1}{2}(-1.25)^2 = 0.78125$$

Phase 2: Back propagation.
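Backpropagation reuses the intermediate values $z^{(1)}$, $h$ and $\hat{y}$ from Phase 1. As a cross-check before differentiating, the four forward steps can be reproduced in a few lines of plain Python. This is only a sketch: the variable names are assumptions, and $W^{(2)}$ is stored as a flat list because it has a single row.

```python
# Initial parameters and the training sample from Phases 0 and 1.
W1 = [[0.3, -0.1], [0.2, 0.4]]
b1 = [0.0, 0.0]
W2 = [0.5, -0.3]  # the single row of the 1 x 2 matrix
b2 = 0.0
x, y = [1.0, 2.0], 1.0

# 1. Pre-activation: z1 = W1 x + b1 (approximately [0.1, 1.0])
z1 = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(W1, b1)]

# 2. Activation: h = ReLU(z1)
h = [max(0.0, z) for z in z1]

# 3. Output: y_hat = W2 h + b2 (approximately -0.25)
y_hat = sum(w * hi for w, hi in zip(W2, h)) + b2

# 4. Loss: L = 1/2 (y_hat - y)^2 (approximately 0.78125)
loss = 0.5 * (y_hat - y) ** 2

# Rounded for display, since binary floats cannot represent 0.1 exactly.
print([round(z, 6) for z in z1], round(y_hat, 6), round(loss, 6))
```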

Step 1. Error signal at the output.

$$\frac{\partial L}{\partial \hat{y}} = \frac{1}{2} \cdot 2 (\hat{y} - y) = \hat{y} - y = -1.25 \quad \text{(error signal)}$$

Step 2. Output layer gradients.

$$\frac{\partial L}{\partial W^{(2)}} = \frac{\partial L}{\partial \hat{y}} h^T = -1.25 \begin{pmatrix} 0.1 & 1.0 \end{pmatrix} = \begin{pmatrix} -0.125 & -1.25 \end{pmatrix}$$

$$\frac{\partial L}{\partial b^{(2)}} = \frac{\partial L}{\partial \hat{y}} \cdot \frac{\partial \hat{y}}{\partial b^{(2)}} = (\hat{y} - y)(1) = -1.25$$

Step 3. Error signal $\rightarrow$ Hidden Layer.

Since $\hat{y} = W^{(2)}h + b^{(2)}$ and $L = \frac{1}{2}(\hat{y} - y)^2$, the chain rule gives

$$\frac{\partial L}{\partial h} = \left(\frac{\partial \hat{y}}{\partial h}\right)^T \frac{\partial L}{\partial \hat{y}} = (W^{(2)})^T \frac{\partial L}{\partial \hat{y}} = \begin{pmatrix} 0.5 \\ -0.3 \end{pmatrix} (-1.25) = \begin{pmatrix} -0.625 \\ 0.375 \end{pmatrix}$$

Step 4. ReLU

$$\frac{\partial L}{\partial z^{(1)}} = \frac{\partial L}{\partial \hat{y}} \frac{\partial \hat{y}}{\partial h} \frac{\partial h}{\partial z^{(1)}} = \frac{\partial L}{\partial h} \odot \text{ReLU}'(z^{(1)}), \quad h = \text{ReLU}(\underbrace{W^{(1)}x + b^{(1)}}_{z^{(1)}})$$

From the forward pass, both components of $z^{(1)}$ are positive:

$$z^{(1)} = W^{(1)}x + b^{(1)} = \begin{pmatrix} 0.3 & -0.1 \\ 0.2 & 0.4 \end{pmatrix} \begin{pmatrix} 1 \\ 2 \end{pmatrix} = \begin{pmatrix} 0.1 \\ 1.0 \end{pmatrix}$$

Since $\text{ReLU}(z) = \max(0, z)$, its derivative is $\text{ReLU}'(z) = 1$ for $z > 0$ and $0$ for $z < 0$, so

$$\text{ReLU}'(z^{(1)}) = \begin{bmatrix} 1 \\ 1 \end{bmatrix}$$

$$\frac{\partial L}{\partial z^{(1)}} = \frac{\partial L}{\partial h} \odot \text{ReLU}'(z^{(1)}) = \begin{bmatrix} -0.625 \\ 0.375 \end{bmatrix} \odot \begin{bmatrix} 1 \\ 1 \end{bmatrix} = \begin{bmatrix} -0.625 \\ 0.375 \end{bmatrix}$$

Step 5. Hidden layer gradients.

$$\frac{\partial L}{\partial W^{(1)}} = \frac{\partial L}{\partial z^{(1)}} x^T = \begin{pmatrix} -0.625 \\ 0.375 \end{pmatrix} \begin{pmatrix} 1 & 2 \end{pmatrix} \quad \text{(outer product)}$$

$$= \begin{pmatrix} -0.625 \cdot 1 & -0.625 \cdot 2 \\ 0.375 \cdot 1 & 0.375 \cdot 2 \end{pmatrix} = \begin{pmatrix} -0.625 & -1.25 \\ 0.375 & 0.75 \end{pmatrix}$$

$$\frac{\partial L}{\partial b^{(1)}} = \frac{\partial L}{\partial z^{(1)}} = \begin{bmatrix} -0.625 \\ 0.375 \end{bmatrix}$$

PHASE 3. Parameter Update.
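The update uses the five gradients from Phase 2. Before applying them, it can be worth checking one of them against a central finite difference. The sketch below recomputes the gradients in plain Python and compares the entry of $\partial L / \partial W^{(1)}$ in row 1, column 2 with a numerical estimate. The helper name `loss_and_cache`, the step size `eps`, and the choice of entry are assumptions made for illustration.

```python
def loss_and_cache(W1, b1, W2, b2, x, y):
    """Forward pass; returns the loss plus the values backprop needs."""
    z1 = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(W1, b1)]
    h = [max(0.0, z) for z in z1]
    y_hat = sum(w * hi for w, hi in zip(W2, h)) + b2
    return 0.5 * (y_hat - y) ** 2, z1, h, y_hat


W1 = [[0.3, -0.1], [0.2, 0.4]]
b1 = [0.0, 0.0]
W2 = [0.5, -0.3]  # the single row of the 1 x 2 matrix
b2 = 0.0
x, y = [1.0, 2.0], 1.0

_, z1, h, y_hat = loss_and_cache(W1, b1, W2, b2, x, y)

# Backward pass, in the order of the Phase 2 derivation.
d_yhat = y_hat - y                                           # dL/dy_hat
dW2 = [d_yhat * hi for hi in h]                              # dL/dy_hat * h^T
db2 = d_yhat                                                 # dL/db2
dh = [w * d_yhat for w in W2]                                # W2^T * dL/dy_hat
dz1 = [g * (1.0 if z > 0 else 0.0) for g, z in zip(dh, z1)]  # ReLU mask
dW1 = [[g * xi for xi in x] for g in dz1]                    # outer product dz1 x^T
db1 = list(dz1)                                              # dL/db1

# Central finite difference on W1[0][1] (row 1, column 2 in the notes).
eps = 1e-6
W1_plus = [row[:] for row in W1]
W1_minus = [row[:] for row in W1]
W1_plus[0][1] += eps
W1_minus[0][1] -= eps
numeric = (loss_and_cache(W1_plus, b1, W2, b2, x, y)[0]
           - loss_and_cache(W1_minus, b1, W2, b2, x, y)[0]) / (2 * eps)

print("analytic:", dW1[0][1], "numeric:", numeric)
```

The two printed numbers should agree to several decimal places. The same check can be repeated for any other parameter by perturbing that entry instead.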

Gradient Descent:

$$\theta_{\text{new}} = \theta_{\text{old}} - \alpha \nabla_{\theta} L$$

INIT $\rightarrow$ 1st update

$$W^{(1)} = \begin{bmatrix} 0.3 & -0.1 \\ 0.2 & 0.4 \end{bmatrix} \quad b^{(1)} = \begin{bmatrix} 0 \\ 0 \end{bmatrix} \quad W^{(2)} = \begin{pmatrix} 0.5 & -0.3 \end{pmatrix} \quad b^{(2)} = 0$$

Take $\alpha = 0.1$

$$W^{(2)}_{\text{new}} = W^{(2)} - \alpha \frac{\partial L}{\partial W^{(2)}} = \begin{pmatrix} 0.5 & -0.3 \end{pmatrix} - 0.1 \begin{pmatrix} -0.125 & -1.25 \end{pmatrix} = \begin{pmatrix} 0.5125 & -0.175 \end{pmatrix}$$

$$W^{(1)}_{\text{new}} = W^{(1)} - \alpha \frac{\partial L}{\partial W^{(1)}} = \begin{pmatrix} 0.3 & -0.1 \\ 0.2 & 0.4 \end{pmatrix} - 0.1 \begin{pmatrix} -0.625 & -1.25 \\ 0.375 & 0.75 \end{pmatrix} = \begin{pmatrix} 0.3625 & 0.025 \\ 0.1625 & 0.325 \end{pmatrix}$$

$$b^{(2)}_{\text{new}} = b^{(2)} - \alpha \frac{\partial L}{\partial b^{(2)}} = 0 - (0.1)(-1.25) = 0.125$$

$$b^{(1)}_{\text{new}} = b^{(1)} - \alpha \frac{\partial L}{\partial b^{(1)}} = \begin{bmatrix} 0 \\ 0 \end{bmatrix} - 0.1 \begin{bmatrix} -0.625 \\ 0.375 \end{bmatrix} = \begin{bmatrix} 0.0625 \\ -0.0375 \end{bmatrix}$$
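The update arithmetic can be replayed in plain Python as a sanity check. The sketch below hard-codes the Phase 0 parameters and the Phase 2 gradients, applies one gradient descent step with $\alpha = 0.1$, and re-evaluates the loss on the same sample. The variable names and the `loss` helper are assumptions made for illustration.

```python
alpha = 0.1

# Initial parameters (Phase 0) and gradients (Phase 2).
W1 = [[0.3, -0.1], [0.2, 0.4]]
b1 = [0.0, 0.0]
W2 = [0.5, -0.3]  # the single row of the 1 x 2 matrix
b2 = 0.0
dW1 = [[-0.625, -1.25], [0.375, 0.75]]
db1 = [-0.625, 0.375]
dW2 = [-0.125, -1.25]
db2 = -1.25

# theta_new = theta_old - alpha * gradient, applied element-wise.
W1_new = [[w - alpha * g for w, g in zip(w_row, g_row)]
          for w_row, g_row in zip(W1, dW1)]
b1_new = [b - alpha * g for b, g in zip(b1, db1)]
W2_new = [w - alpha * g for w, g in zip(W2, dW2)]
b2_new = b2 - alpha * db2


def loss(W1, b1, W2, b2, x, y):
    """Forward pass followed by the squared-error loss."""
    z1 = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(W1, b1)]
    h = [max(0.0, z) for z in z1]
    y_hat = sum(w * hi for w, hi in zip(W2, h)) + b2
    return 0.5 * (y_hat - y) ** 2


x, y = [1.0, 2.0], 1.0
loss_before = loss(W1, b1, W2, b2, x, y)
loss_after = loss(W1_new, b1_new, W2_new, b2_new, x, y)

print("W1_new:", W1_new)
print("b1_new:", b1_new, "W2_new:", W2_new, "b2_new:", b2_new)
print("loss before:", loss_before, "loss after:", loss_after)
```

For this sample the loss after the update should come out lower than the initial 0.78125, which is what a single descent step with a small enough learning rate is expected to do.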

Graphic Demonstrations